Wrap-up

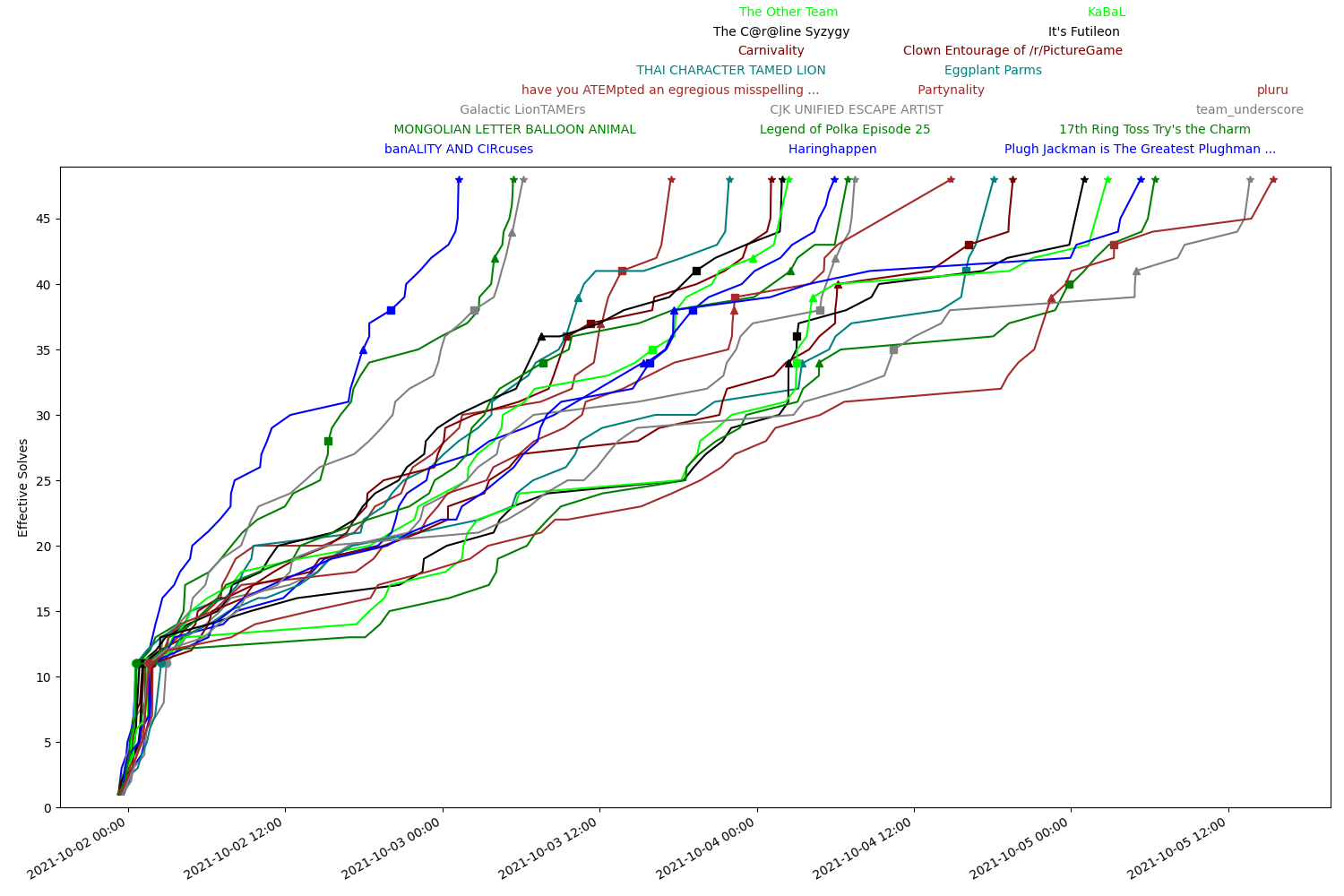

Congratulations to banALITY AND CIRcuses for being the first to help Matt & Emma get FOURTEEN YEARS BAD LUCK and reunite, finishing the hunt in just over 26 hours.

We'd like to thank all the carnival attendees for solving Teammate Hunt 2021. In total, 384 teams solved at least 1 puzzle, 271 teams finished the Magical and Entanglement metas, 190 teams solved at least one pair of entangled puzzles, and 67 teams finished the puzzlehunt. We'd also like to shout out the following teams:

- The Other Team for getting the first solve on Magical and Entanglement

- ᢇ MONGOLIAN LETTER BALLOON ANIMAL for getting the first solve on The Mystical Plaza

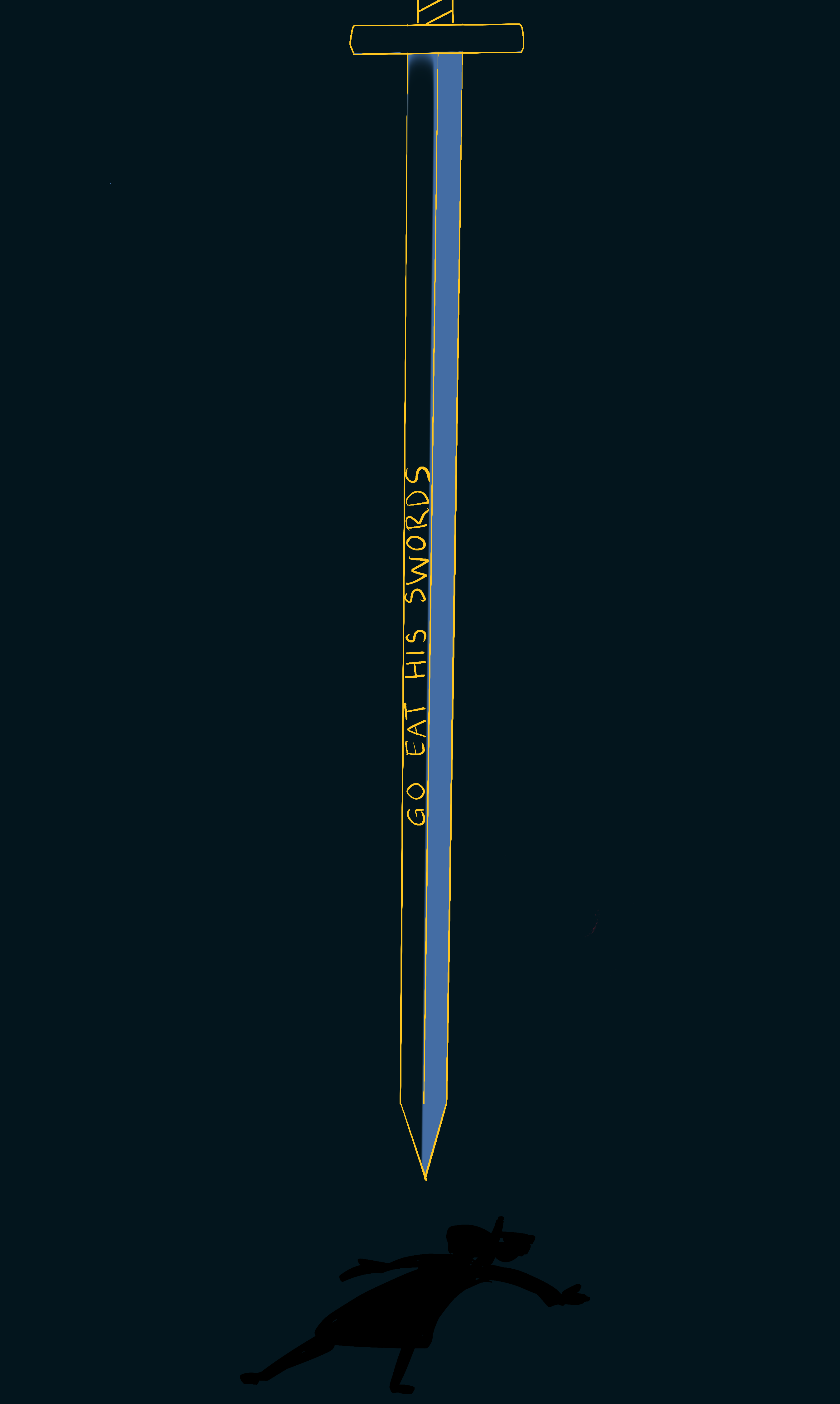

- banALITY AND CIRcuses for getting the first solve on The Sword Swallowers

- Lithium Triglycerides for being the last team to finish (5 minutes before hunt end!)

- Send In The Llamas for solving all puzzles with the fewest incorrect guesses (11)

Theme & Story

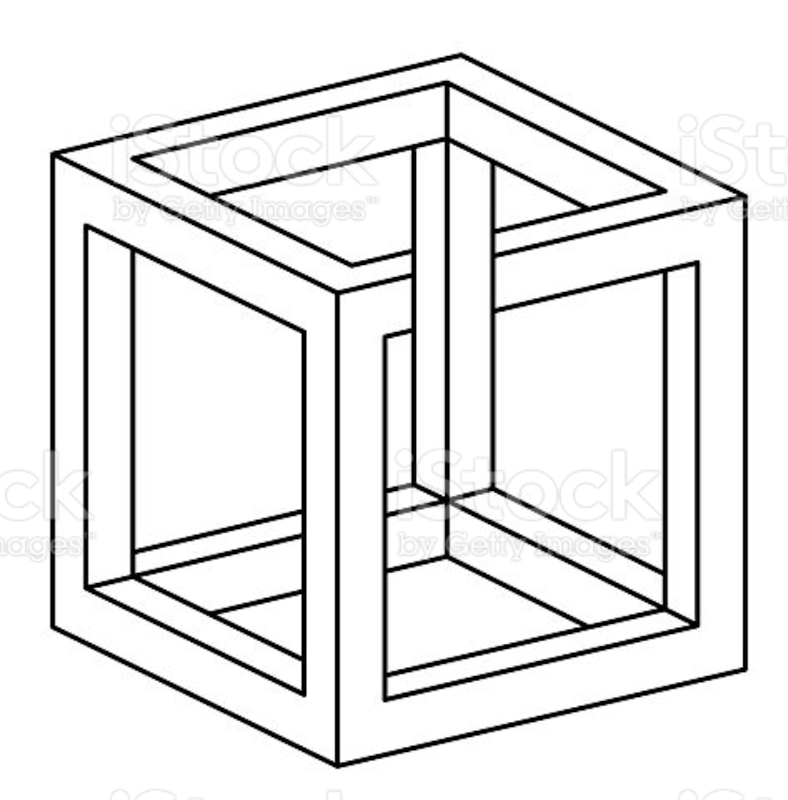

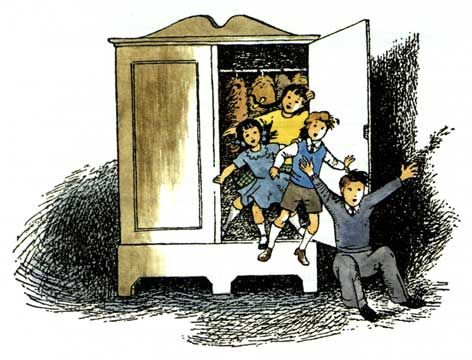

During Matt & Emma's Carnival Conundrum, a traveling carnival arrives in town. Matt and Emma spend the day delighting in activities at the carnival (i.e. puzzles) before being invited by the Magician to participate in the grand finale of his magic show: a disappearing act. Matt and Emma each step into a box, and the magic begins.

Upon exiting their boxes, each twin finds themselves alone in a strange dark dimension. Nobody else at the carnival seems to remember the existence of their missing twin at all, and the Magician slips away. Matt and Emma must now travel through the carnival to chase down and confront the Magician. During their journey, they encounter unusual anomalies: puzzles that are entangled across dimensions, which Matt and Emma work together to solve.

After defeating the Magician in their respective dimensions, Matt and Emma arrive at the Hall of Mirrors. They each break through a mirror, tumbling back into their home dimension. When they look back, the carnival has disappeared.

Writing Goals & Timeline

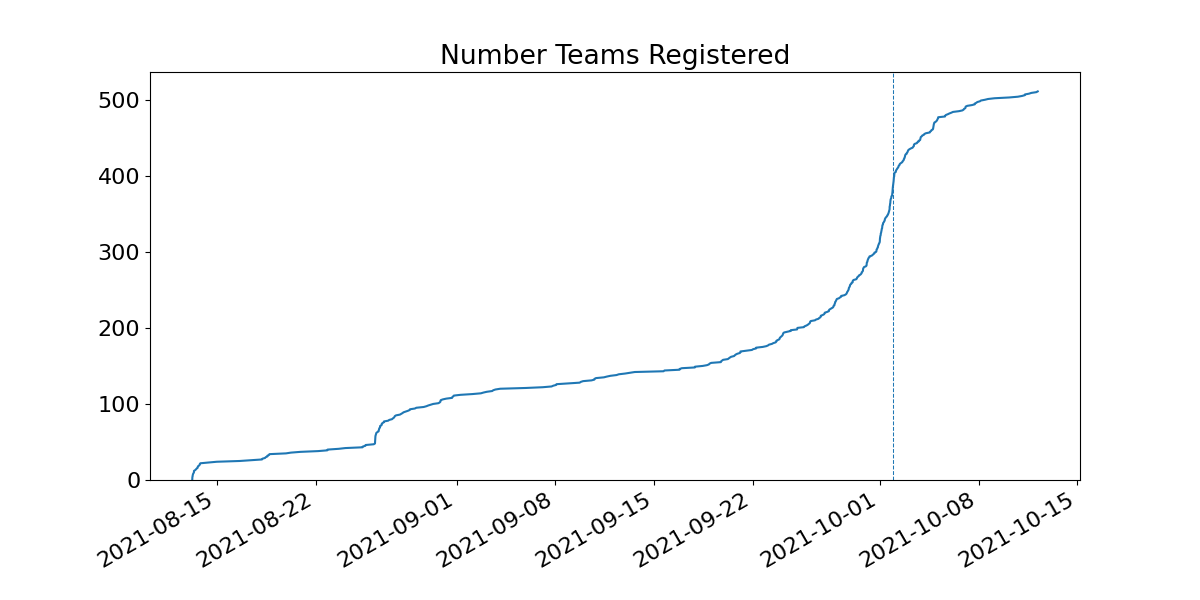

Hunt planning started in early March, with a planned release date of early October. Last year, we started in February and ran in late October, but we had reasons to believe we could write on a shorter timeline this year. Much of the website code could be reused, and we planned to write a shorter hunt than last year, about 30-40 puzzles (compared to the 45 puzzles + games written last year).

We aimed to write less tech-intensive puzzles (nothing as detailed as Playmate), and made sure ambitious theme proposals were better-scoped. In practice, lots of people wrote tech-intensive puzzles anyways, and due to scope creep, we wrote more puzzles than last year. We initially estimated entangled puzzle pairs as 1.5 puzzles worth of effort. Once we started building them, it became clear that was very incorrect.

By the summer, we were behind our timeline. Puzzle writing motivation was lower this year, possibly because Teammate Hunt 2020 writing coincided with initial lockdowns from COVID-19, whereas Teammate Hunt 2021 writing coincided with vaccinations and loosening of in-person restrictions. Based on progress made, we gave serious thought to delaying the hunt until 2022, but after a team survey we decided to commit to an October 1 deadline.

Overall we've learned that:

- We're a tech-happy team that wants to write interactive puzzles, even though they tend to take longer to construct.

- We love to set high bars and make work for ourselves.

Theme Selection

Theme selection was done via "pitch" docs. People interested in a theme wrote a short few-page theme proposal, describing the plot and hunt structure. These themes were circulated for a few weeks to get feedback, then pitched during a team meeting in late March. After hearing all proposals, we voted for themes on a scale from 1 to 5, with a run-off between the top two themes. In general, proposals this year had a greater emphasis on story, since this was somewhat lacking in our previous hunt.

The carnival theme that was pitched more or less matched the end result, including the magic act, entangled puzzles, parallel “dark dimension” maps, and the hall of mirrors finale. Some of the goals of this proposal included having a simple story, with just a few plot points that were each tightly integrated with the puzzles and structure. The carnival theme won the run-off election by a single vote. We won't share the other themes, in case we use them in the future.

Adventures In Narrative Design

After we decided on our theme and plot outline, we had to decide how to actually present and write it. Since we only had a few (but very important) plot points, we wanted a delivery method that would be more engaging and impactful than a block of text. After discussing a few options, including video updates, we landed on illustrations with the goal of telling our story as visually as possible. Our original plan was to only have story art for the metas, which coincided with plot points in the story, but early on in the process Rachel (our story artist) introduced the idea of having a piece of story art for every puzzle in the hunt. The hope was that this would keep the story in people’s minds even as they were solving puzzles, providing continuity between story updates.

We loved the idea of not having any dialogue in our story at all, but were worried about clearly communicating the plot point of Matt and Emma’s separation. This was of particular concern due to the more abstract direction the story art took. We’re happy with where it ended up, with the magician having only a few lines of dialogue, all in the post-disappearance story card.

While there was a lot of debate and discussion internally, we’re glad that our work paid off - the art and story appear to have been a hit. While we consider our story this hunt to be an overwhelming success, we’re excited to try new formats and ideas for future puzzlehunts!

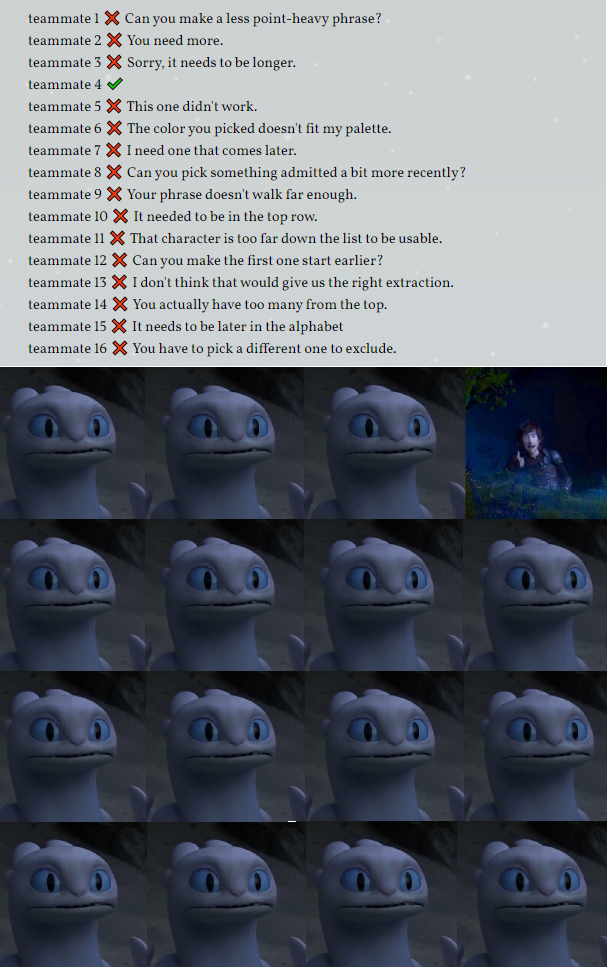

Entanglement

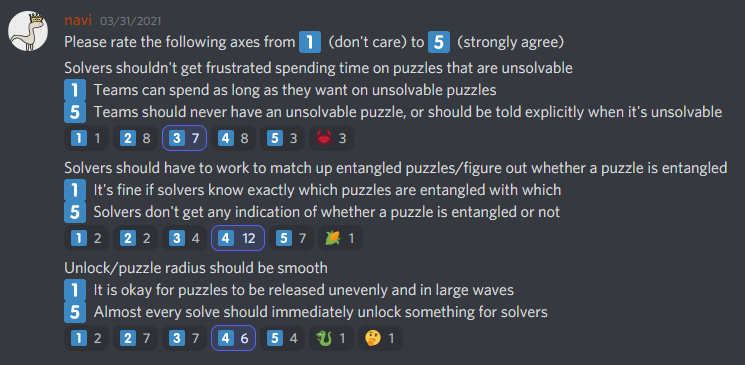

The core mechanic of Act II, entanglement, linked together pairs of puzzles from each of Matt and Emma’s dimensions. There were many ways this could be implemented, so shortly after the theme was decided, we ran a survey to gauge opinions on structure.

We had disagreeing opinions on many aspects of entanglement, except one: we strongly felt that solvers should work to determine how puzzles were entangled, or if they were entangled at all.

This led to the following set of goals:

- Variety - the way in which entanglement occurs should vary from pair to pair. Some puzzles used cluephrases (Menagerial Problems / Pumpkin Patch), some used matching counts (Light Show / Tumbled Tower), some used structural similarity (Quick Response / Single Elimination), some used interactivity (Remember to Hydrate! / Mystery Manor), and some used a combination of many things like flavor, cluephrases, and structure (Contortionist’s Act / Human Pyramid).

- Independence - both sides should be independent puzzles, rather than one puzzle just using the other for extraction. In general, they should have useful things to work on prior to realizing entanglement.

- Intertwined - it should be impossible to solve an entangled puzzle by itself, such that solvers never need to check solved puzzles to find pairs.

We feel we hit these goals, aside from a few intentional exceptions like Remember to Hydrate!

Puzzle entanglement is not an entirely novel mechanic. During puzzle writing, we discussed times it appeared in prior hunts, like It Takes Two (2018 hunt), mezzacotta's Day 4 puzzles (2016 hunt), and the Reverse Dimension round from MIT Mystery Hunt 2009. We thought these were far enough back in time that it was okay to revisit the idea, and that our design of never clearly identifying single puzzles vs puzzle pairs would make it feel different.

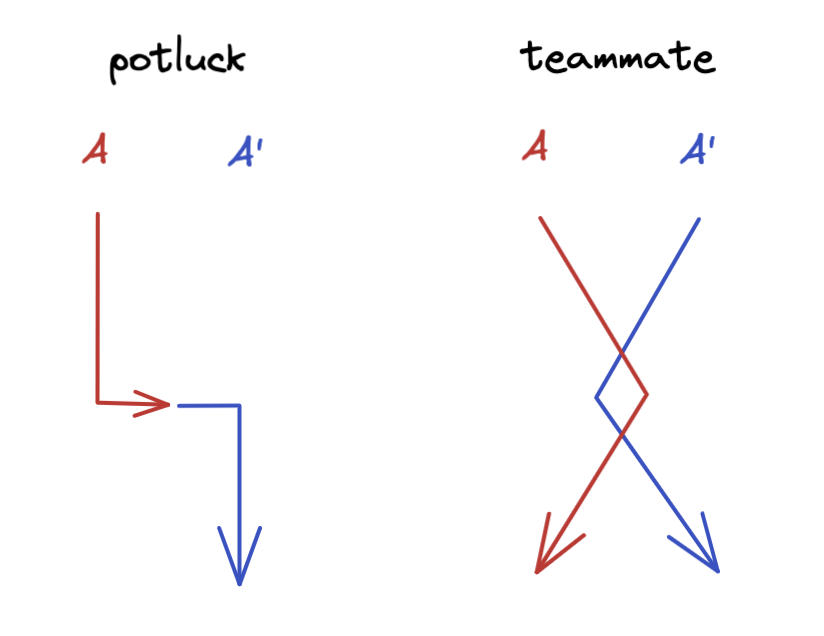

When Puzzle Potluck 4 (2021 hunt) used a similar mechanic, we panicked initially, but decided to proceed for the following reasons:

- That hunt clearly indicated that puzzles needed to be paired, and every puzzle would be paired; in contrast, ours left the realization and puzzle matching up to the solvers to discover.

- In that hunt, the flow of many puzzle pairs was to complete one half, then carry it to another half to finish, getting a single answer. Many 1st halves could be IDed just by how approachable the puzzle was with no extra info. Our "Independence" goal led to a different solve flow, by increasing the work needed to ID pairings.

- We were well past the point of no return (all metas written, 80% of puzzles in writing). Realistically we weren’t going to change course.

On the flip side, Puzzle Potluck 4 did prove it was possible to pair puzzles even when they all unlocked at once, and that the concept could be very well-executed. We’re grateful for their useful data point when deciding our unlock structure.

Unlock Structure

Our Act I unlocks were similar to last year. Teams started with 3 puzzles unlocked and unlocked 2 puzzles on the first solve, and 1 more for every subsequent solve. New this year were time unlocks. A team with 0 solves in Act I would see a new puzzle about once every 4 hours. The Magical / Entanglement metas were not time unlocked and required 6 solves to unlock.
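
The Act I rules above can be sketched in a few lines of Python. This is an illustrative model only, not the hunt's actual code: the function names are hypothetical, the "about once every 4 hours" time unlock is treated as exact, and the cap imposed by the total number of Act I puzzles is ignored.

```python
START_WIDTH = 3            # puzzles open at the start of Act I
META_SOLVES_NEEDED = 6     # solves required for the Magical / Entanglement metas
HOURS_PER_TIME_UNLOCK = 4  # assumption: "about once every 4 hours" taken as exact


def puzzles_unlocked_by_solves(solves: int) -> int:
    """Total Act I puzzles a team has unlocked purely through solving."""
    if solves <= 0:
        return START_WIDTH
    # The first solve unlocks 2 puzzles; each later solve unlocks 1 more.
    return START_WIDTH + 2 + (solves - 1)


def puzzles_unlocked_by_time(hours_elapsed: float) -> int:
    """Total Act I puzzles visible to a team with 0 solves after some hours."""
    return START_WIDTH + int(hours_elapsed // HOURS_PER_TIME_UNLOCK)


def metas_unlocked(solves: int) -> bool:
    """The intro metas were gated on solves only, never on time."""
    return solves >= META_SOLVES_NEEDED
```

Under this model, a team's open width (unlocked minus solved) is 3 before its first solve and 4 after any number of solves, until the round runs out of puzzles.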

Act II unlocks were significantly more complex. Early on, we decided on single track unlocks to ensure consistency in entangled puzzle unlocks. All Act II solves contributed equally to unlocks and all teams saw puzzles in the same order. Even with this constraint, there were many competing desires all in tension with one another:

- We wanted every puzzle to unlock another, but didn’t want teams pairing entangled puzzles based solely on what unlocked at the same time.

- We wanted entangled puzzles interspersed in Act II, but also wanted metas to unlock after their feeders; more entangled puzzles early meant the meta unlocked later.

- We didn’t want to flood solvers with puzzles, because we believed it would make it harder to pair puzzles, but we also wanted a higher width than last year, since our hunt was looking difficult and getting stuck on an entangled pair reduced a team's effective width by 2.

After many hours of discussion, we landed on the following.

- Teams start with 4 puzzles unlocked - 2 regular puzzles and 1 entangled pair. This small width would ideally force teams to look at Menagerial Problems / Pumpkin Patch, and introduce the idea that puzzles could be paired or unpaired.

- Half of the entangled pairs were interleaved with regular puzzles, and the other half unlocked towards the end. We liked how this ramped up to the Hall of Mirrors finale.

- Teams unlocked 1.33 puzzles/solve, increasing over the hunt to 2 puzzles/solve to accommodate unlocking entangled pairs. Width grew from 4 puzzles to a max of 14 puzzles.

- Entangled puzzles unlocked at most 2 solves apart from each other. The first two pairs unlocked at the same time, and every future pair unlocked 1-2 solves apart. If an incomplete pair unlocked, it always unlocked with an unpaired puzzle to ensure effective width never went down.

- Metas only unlocked after all their feeders unlocked. Normal puzzle unlocks were biased towards the Matt round, which had easier feeders. This let us unlock all 9 Matt feeders earlier, which let us unlock The Mystical Plaza earlier.

- Specific numbers: The Mystical Plaza unlocked at 5/9 Matt solves and 12 Act II solves in total. The Sword Swallowers unlocked at 6/9 Emma solves and 17 Act II solves. All puzzles unlocked within 20 Act II solves. Hall of Mirrors unlocked after both The Mystical Plaza and The Sword Swallowers were solved, and at least 12/16 entangled puzzles were solved.
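
The meta thresholds in the last bullet can be written as simple predicates. The sketch below is hypothetical (the class and function names are not from our codebase) and assumes that both conditions listed for each meta had to hold at the same time.

```python
from dataclasses import dataclass


@dataclass
class Act2Progress:
    matt_solves: int       # solved Matt-round feeders (out of 9)
    emma_solves: int       # solved Emma-round feeders (out of 9)
    act2_solves: int       # all Act II solves so far
    entangled_solved: int  # solved entangled puzzles (out of 16)
    plaza_solved: bool = False       # The Mystical Plaza solved
    swallowers_solved: bool = False  # The Sword Swallowers solved


def mystical_plaza_unlocked(p: Act2Progress) -> bool:
    # 5/9 Matt solves and 12 Act II solves in total
    return p.matt_solves >= 5 and p.act2_solves >= 12


def sword_swallowers_unlocked(p: Act2Progress) -> bool:
    # 6/9 Emma solves and 17 Act II solves
    return p.emma_solves >= 6 and p.act2_solves >= 17


def hall_of_mirrors_unlocked(p: Act2Progress) -> bool:
    # Both round metas solved, plus at least 12/16 entangled puzzles
    return p.plaza_solved and p.swallowers_solved and p.entangled_solved >= 12
```

For example, a team with 5 Matt solves, 3 Emma solves, and 12 Act II solves overall would see The Mystical Plaza but not yet The Sword Swallowers.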

Based on the feedback form, teams generally did not feel bottlenecked and only a few teams felt they were flooded by puzzles. However, a number of teams commented on the gaps between puzzle halves. The main lesson we’ve learned is that "2 solves" can feel like a very long time, especially if a team spends a disproportionate amount of time on an incomplete pair they can’t finish*. Given a do-over, we would consider reducing the gap further or reducing the initial work doable on an incomplete half, among other options.

* As an exception, consider the Remember to Hydrate! / Mystery Manor pair, which also unlocked 2 solves apart. We hoped that a puzzle with nothing to do and an explicit timer would discourage solvers from spending too long on an unsolvable half, while building up the suspenseful reward of entanglement discovery. Thankfully, opinions on this pair were not so divisive.

Time Unlocks

Time unlocks did not continue into Act II, for a few reasons. First, we wanted to preserve the story beat of Matt and Emma getting separated after solving Magical / Entanglement. This made us hesitant to time unlock Act II before the intro meta solve, and we were worried that solving the intro meta and seeing 10+ puzzles at once would be overwhelming. Second, we had carefully chosen the starting Act II puzzles to guide teams towards the entanglement break-in (see our reasoning above). We were worried that if a team had many puzzles open, they might not even see both sides of an entangled pair. A team without time unlocks does hit up to 14 open puzzles by the end, but it is impossible to reach that width without solving some entangled pairs, so we felt it was okay to expect teams to spend more time divining pairs towards the end.

Instead of time unlocks, we leaned on providing additional hints and the hint follow-up system. While this doesn't help teams hesitant about using hints, we think it was the correct choice this year given the overall structure of the hunt.

Testsolving

We once again used Puzzlord to manage puzzle creation. To test pairing entangled puzzles, we came up with a faction system. At the start of puzzle writing, authors sorted themselves into 4 factions. Every puzzle was assigned a faction, and no one in that faction could be spoiled on that puzzle. (So for example, a puzzle with authors in factions 1, 3, 4 would be assigned to faction 2, and could only be testsolved by people who weren’t in faction 2.) During individual testsolving, entangled pairs were tested assuming solvers knew the specific pairings.
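
The faction rule can be illustrated with a small Python sketch. It is not taken from Puzzlord: the function names are hypothetical, and picking the lowest-numbered free faction is an assumption (the real choice may have been made by hand).

```python
from typing import Iterable

FACTIONS = (1, 2, 3, 4)


def assign_faction(author_factions: Iterable[int]) -> int:
    """Pick a faction with no authors on the puzzle; it stays unspoiled."""
    taken = set(author_factions)
    free = [f for f in FACTIONS if f not in taken]
    if not free:
        # The write-up does not say what happened in this case.
        raise ValueError("every faction has an author on this puzzle")
    return min(free)  # assumption: tie-break on the lowest faction number


def may_testsolve_individually(solver_faction: int, puzzle_faction: int) -> bool:
    """Individual testsolvers must come from outside the puzzle's faction.

    Authorship is not modeled here; authors are spoiled by definition.
    """
    return solver_faction != puzzle_faction
```

With the example from the text, `assign_faction([1, 3, 4])` returns 2, so faction 2 members stay unspoiled on that puzzle until their batch testsolve.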

After passing individual testsolving, we then ran batch testsolves. On four different weekends, faction N members tested all faction N puzzles in one big session. The primary goal was to verify that a team spoiled on entanglement could successfully pair puzzles among a wide set of alternatives. These were reasonably effective; however, due to our batching, we did miss a few red herrings, such as the seven masks in both Quick Response and Human Pyramid.

As for testing entanglement as a whole, we ran two full hunt testsolves. We used these testsolves to tune the unlock structure and the initial four Act II puzzles, including Menagerial Problems / Pumpkin Patch (the intended break-in for entanglement). We also ran one testsolve with newer solvers to test the Act I puzzles up to the Magical and Entanglement metas. This was very helpful in tuning Act I to be more beginner-friendly, and it’s thanks to this testsolve that Entanglement has the name it has! (The initial meta used to be named Entrancement, but when the testsolve group misread it as Entanglement, we realized there was no reason we couldn’t name it Entanglement to foreshadow the upcoming Act II mechanic.) Many thanks to our testsolvers for volunteering!

Technical Details

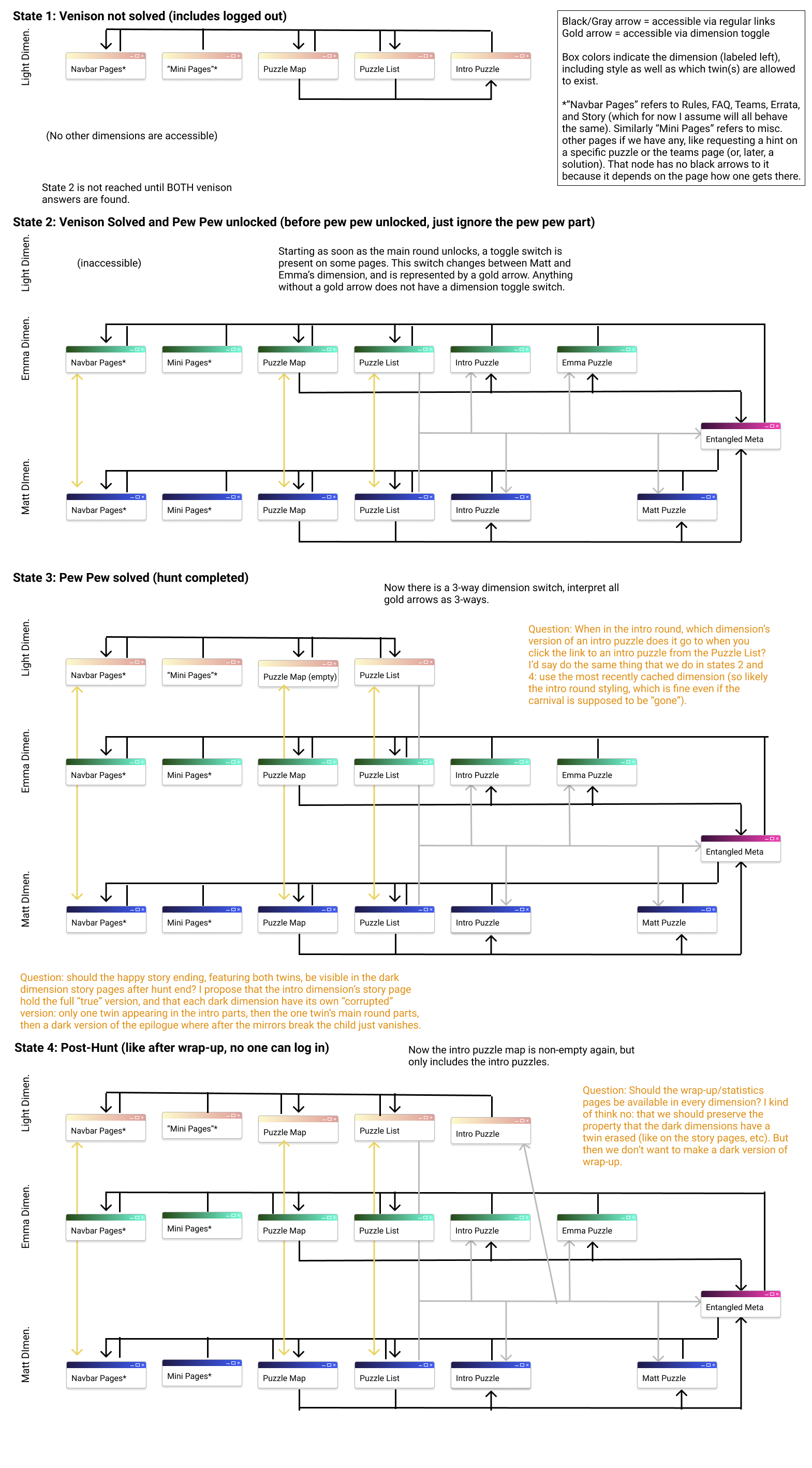

Website

The website skeleton is essentially the same as last year, with a mix of Python, Django, NextJS, and React. This year, we added more infrastructure for websockets, which we used to implement solve notifications, as well as some of the interactive puzzles.

Art mockups for the website were done in Figma, which we used to diagram the site's appearance, as well as how pages should link to each other. The linking behavior got pretty complex as we determined how the site should work depending on solve progress.

Three dimensions essentially meant 3x the CSS and art assets, but the story, art, and web teams wanted each part to complement each other and form a Gesamtkunstwerk. One major pain point was the sheer amount of art we had to upload to the site. To reduce loading times on the story and map pages, we tried to downscale, optimize, and lazy-load images. While we crafted scripts to automate the initial art, many last-minute changes had to be manually uploaded (some even during the hunt!). If we run another image-heavy hunt, we will probably invest in external static hosting and automated scripts for resizing and optimizing images.

The remaining website changes were in our backend, most notably around copy-to-clipboard, email, and hints. To support images in copy-to-clipboard, we changed our backend to use WhiteNoise, serving images from random URLs rather than gating them behind puzzle unlock. We rewrote our email system to hook into the Django backend, allowing us to reply to emails as if they were hint requests, and modified our hint system to support follow-up hint requests (a major request from last year). Emails were sent from a self-hosted email server, and bulk email announcements were built directly into our site, to avoid issues we hit last year.

One unexpected result of supporting hint follow-ups was many teams sending us thank you notes via the hint system. Thanks for the kind messages!

To ensure that the site is still accessible once we shut down our servers, we’re looking into Pyodide, web workers, and IndexedDB to allow running Python code in the browser, effectively leveraging all of our server logic for interactive puzzles.

Discord

We once again used Discord to organize team meetings, set up testsolves, and receive hunt alerts. New this year were author writing and testsolve channels. Authors often wanted a faster communication channel than Puzzlord, so we set up Discord bots that let us quickly create private channels for puzzle discussion and testsolving. This was especially useful for coordinating batch testsolves. On the other hand, private channels for individual puzzles decentralized discussions and rendered Puzzlord less accurate and useful.

Website Art

When we started working on the hunt, we realized we had a lot more people interested in contributing art than we did last year. This allowed us to set more ambitious art goals, which was great since we wanted the website to exist across three dimensions! It also required additional work to coordinate art tasks and keep everything visually cohesive.

Site Design

For the site, our goals were to create a carnival aesthetic that didn’t feel too clichéd or cheesy, and to maintain some consistency across dimensions, while also having a dramatic shift in tone between Acts I and II. We pulled inspiration from old flyers for carnivals, magicians, and state fairs for their use of color and type-driven designs, and we tried to reinterpret common carnival shorthand (like red and white stripes) to be a bit more subtle and refined.

For the light dimension, we really leaned into this and focused on a sun-soaked palette with a more overt carnival feel. Moving into the dark dimensions, media like Erin Morgenstern’s The Night Circus and more magical aesthetics were definitely in mind. The mouse circus from Coraline was an initial inspiration, but we wanted ours to feel more mysterious than evil—rich jewel tones and golds both helped build that feeling, and were also picked to be (loosely) photo-negatives of our light dimension palette to emphasize the reversal. To make sure the dimensions all felt cohesive-yet-different, we tried to maintain some common elements throughout—stars from the light background carried into the dark backgrounds in a different form, and swooping puzzle titles and thin lines can be seen throughout the site. On top of that, we wanted to add small magical touches where we could—the icon shine effect, playing card toggle, and removing Matt and Emma’s names in the opposite dimensions are just a few of those little surprises.

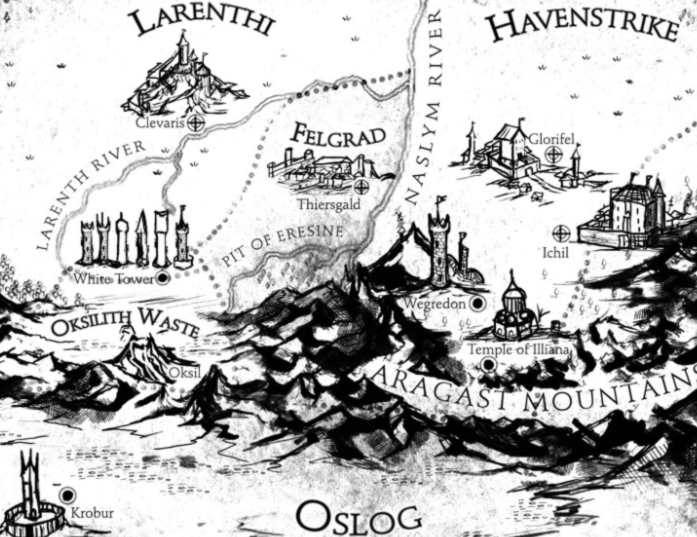

Puzzle Map

We drew inspiration from storybook maps, ouija boards, and tarot cards, since these are culturally recognizable images with magical or supernatural associations. Style-wise, this meant using flat blocks of color and then hatching to create shading or texture. The whole map was intended to look like it was hand-drawn on paper.

We brainstormed ways to add a magical touch to the dark dimensions while keeping the paper aesthetic. We considered using details that looked like origami or collage, or injecting “mistakes” like bleeding ink. In the end, we added a simple gold embossing that would shine in a “light” controlled by the cursor, since this was elegant and looked good with both blue and teal color palettes.

We spent a long time experimenting with color palettes for solved puzzle icons in the dark dimensions. We wanted the map to feel consistent with the mostly monochromatic website, but also thought that color could make it more visually exciting. For some of our experiments we considered using matching patterns to mark entangled puzzle pairs rather than the gold shapes we ultimately used.

We ended up getting the best of both worlds: we made icons be monochromatic, but extended the gold shine effect to make the cursor’s “light” reveal their vibrant colors. This way the map would generally match the dimension’s color palette, but solvers could enjoy playing with the fun blasts of color.

One downside of this was that each puzzle needed 4 to 7 icon images—unsolved and solved icons in each of the intro, Matt, and Emma dimensions, as well as multiple “shine” overlays. So it was a lot of work for the artists!

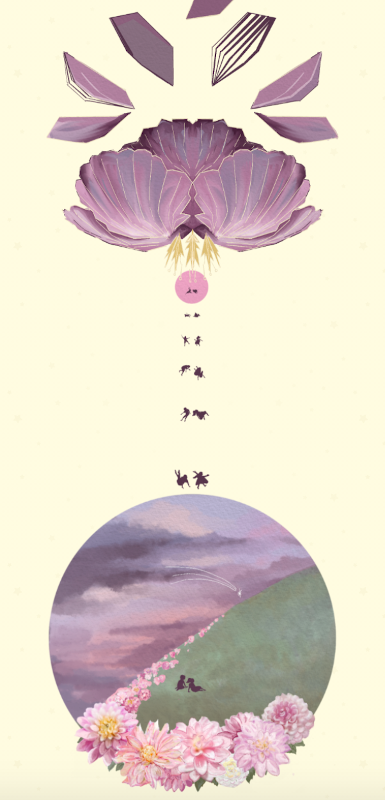

Story Illustrations

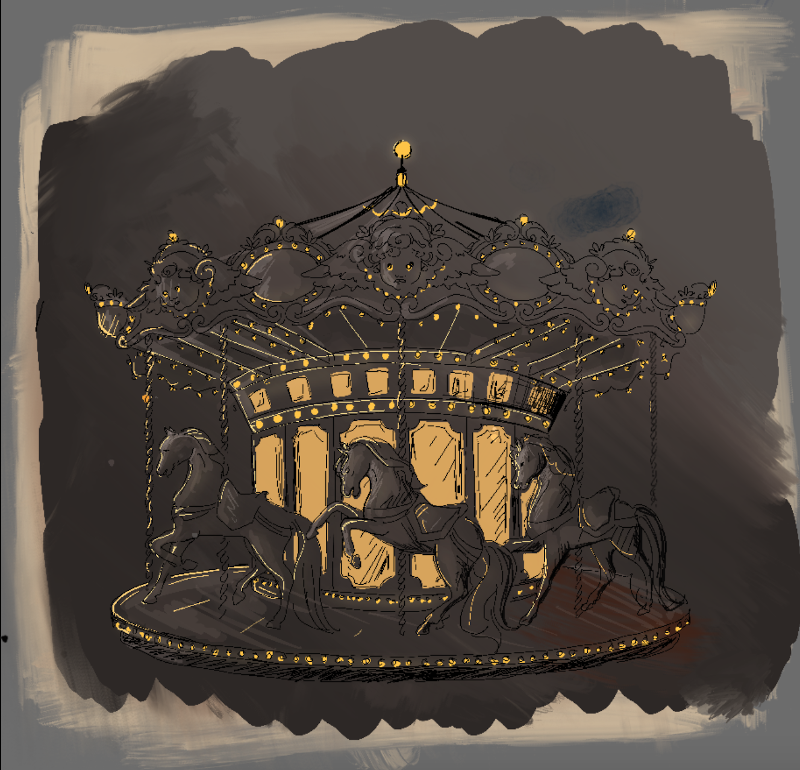

By Rachel

The dark dimensions

I knew as soon as we had decided on a carnival theme that I wanted to lean more into a quiet, subdued aesthetic than the louder, colorful one that I typically associate with spooky carnivals.

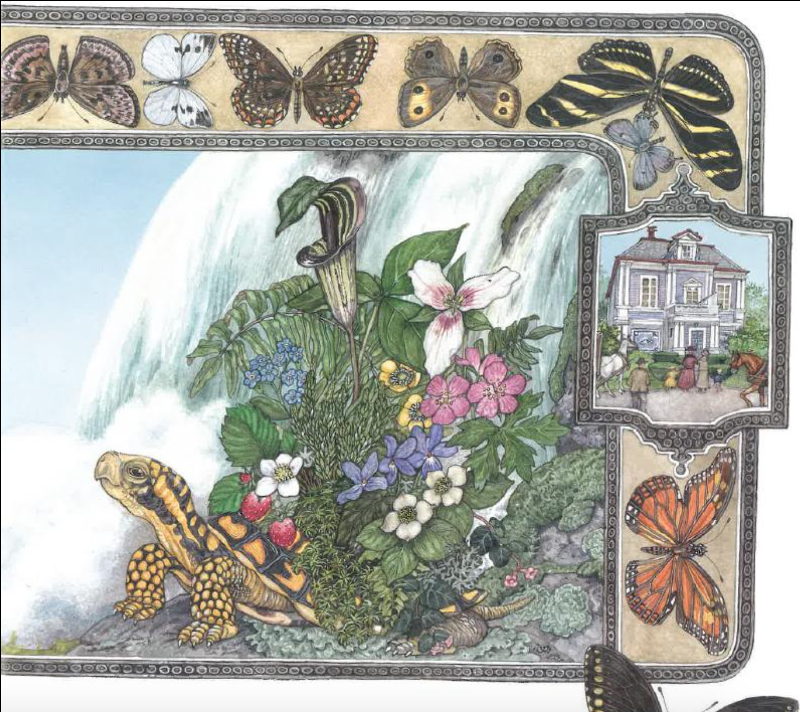

A week or two into the development process, I decided it would be nice to have story endcaps for all of our puzzles in addition to the meta art. I took a page here from old children’s books, particularly Jan Brett’s, which often included lovely insets of scenes secondary to that of the main art.

To test it out, I drew three sample endcaps (for what were, at the time, three of our potential metas). These borrowed from the design of foil stamped books - thin, delicate gold lines on darker plain backgrounds.

We ended up just taking this aesthetic and running with it for everything! Matt became blue, Emma became green, and we used the warmer purple for the Hall of Mirrors reunion. Gold became our accent color across the story, map, and website.

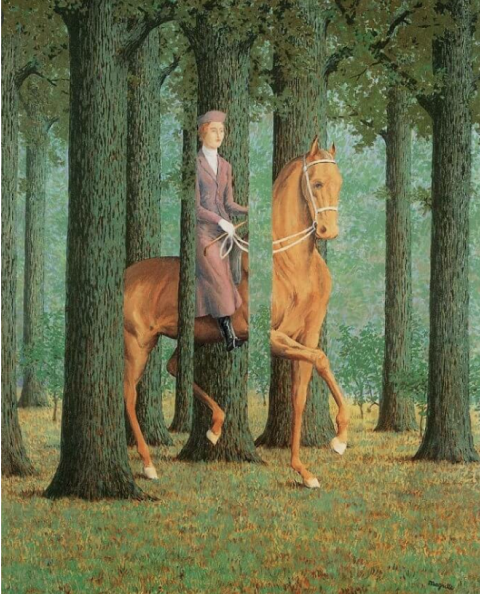

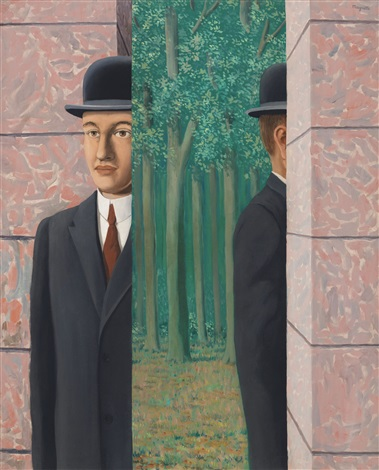

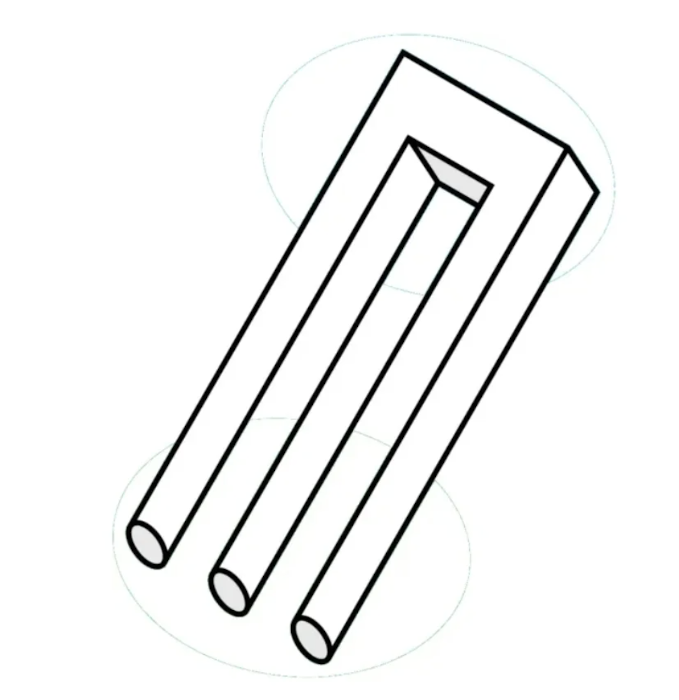

For the actual design of the story art, I wanted to go more abstract - a little ‘dream sequence’, with a current of magic creating an underlying oddness to it all. The twins are sent into these strange dimensions by magic, and so I went for inspiration to classic illusions and the masters of magic on the page that are Escher and Magritte.

I intended for there to be post-Act II meta art that would illustrate the meta answers, but decided against this due to flow. All other large art popups appear in conjunction with a puzzle-related unlock (the unlock of the corresponding meta or, in the case of the post-Magical/Entanglement popups, the unlock of the start of Act II). I worried that it would be disappointing to solve a meta and get an art popup that stood alone. These were the original sketches:

Home

I needed a look for the light dimension that would contrast well with the dark dimensions - this meant something friendly, and inviting. I have always admired how Ghibli movies, and in particular Spirited Away, start out in a world like this - warm blue skies, waves of winds in the grasses, pillowy, billowy clouds that make it far too easy to slip and fall into a daydream - it all feels very magical even without being explicitly so.

The transition, then, between this soft warm home world and the sharper, colder dimensions is all the more dramatic.

My original plan was to have the return to the home world, at the very end of the story, be identical to the start (sans carnival). I think it’s very romantic, how Ghibli does it - we are forevermore changed by our experiences, but the world around us has remained the same. However, I really couldn’t resist using our finale purple, since it’s such a gorgeous color that otherwise shows up only on the final meta page.

Anyway, our world may not have changed, but the way that we see it certainly has, no?

Miscellaneous motifs & other inspiration

My full inspiration board by the end of the hunt. Special thanks to Margaret for the photo of the dog and the Skycrab Holders for their really cute team picture.

Stats

The full guess log is available here. Let us know if you do anything interesting with it!

Other hunt stats, including the Bigboard of all team solves, can be browsed here.

There were 2401 hints during hunt, going up to 3556 hints if you count follow-ups.

Future of Teammate Hunt

Once again, we're not sure what our plans are for future Teammate Hunts yet. We've been pretty busy with this one! We plan to keep the hunt available, but are still investigating how to archive the interactive components, especially parts that cross puzzle boundaries.

If you're interested in solving more puzzles, Puzzle Boat 8 starts October 23, Silph Puzzle Hunt starts December 4, and Puzzle Rojak starts December 18. See Puzzle Hunt Calendar for more events. If you're looking to introduce new solvers to puzzles, check out YukiHunt for a set of fun tutorial puzzles.

Credits

- Hunt Director: Edgar Chen

- Puzzle Editor-in-Chief: Patrick Xia

- Puzzle Editors: Edgar Chen, Katie Dunn, Jacqui Fashimpaur, David Hashe, Andrew He, Bryan Lee, Nishant Pappireddi, Alex Pei, Brian Shimanuki, Liam Thomas, Ivan Wang, Rachel Wei, Patrick Xia, Samuel Yeom

- Puzzle Authors: Herman Chau, Edgar Chen, Katie Dunn, Jacqui Fashimpaur, Alex Gotsis, David Hashe, Andrew He, Alex Irpan, Dominick Joo, Bryan Lee, Austin Lei, Tom Panenko, Nishant Pappireddi, Alex Pei, Christopher Reyes, Joanna Sands, Margaret Sands, Brian Shimanuki, Steven Silverman, Liam Thomas, Olga Vinogradova, Ivan Wang, Rachel Wei, Catherine Wu, Patrick Xia, Justin Yokota

- Puzzle Post-prodders: Herman Chau, Edgar Chen, Jacqui Fashimpaur, Alex Gotsis, Andrew He, Alex Irpan, Dominick Joo, Tom Panenko, Joanna Sands, Brian Shimanuki, Patrick Xia, Ivan Wang, Catherine Wu

- Puzzle Fact-checkers: Taichi Akiyama, Herman Chau, Edgar Chen, Evan Chen, Alex Gotsis, Andrew He, Bryan Lee, Tracey Lin, Yuru Niu (not_coal), Nishant Pappireddi, Alex Pei, Christopher Reyes, Margaret Sands, Steven Silverman, Kevin Sun, Ariel Uy, Patrick Xia, Catherine Wu

- Web Lead: Ivan Wang

- Web and Infra: Alex Irpan, Brian Shimanuki, Ivan Wang

- Art/Story Lead: Jacqui Fashimpaur

- Web/Graphic Design: Christopher Reyes

- Story Art: Rachel Wei

- Narrative Design: Liam Thomas

- Puzzle Map Art: Herman Chau, Katie Dunn, Audrey Fashimpaur, Jacqui Fashimpaur, Olga Vinogradova

- Additional Testsolvers:

- First testsolve: Taichi Akiyama, Moya Chen, Zhan Xiong Chin, Victor Hu, Cameron M., Bhavik Mehta, Sophie Mori, Rahul Sridhar, Ariel Uy, Vickie Wang, Jakob Weisblat, jake, gacha

- Second testsolve: Evan Chen, Harrison Ho, Lennart Jansson, Dominick Joo, Kevin Li, Tracey Lin, Aaron Lin, Max Murin, Yuru Niu, Cami Ramirez-Arau, Kevin Sun, Daniel Whatley, Moor Xu

- Act I testsolve: clarise 6, Samuel Chen, Claire G, Kaz, Karen M, rÿàń, lohith tummala

Appendix

Appendix A: Fun Stuff

Profile Pictures

18 teams tried uploading profile pictures that weren't JPEGs or PNGs.

All team profile pictures can be viewed here. Warning: there are a lot of images, this may load pretty slowly!

Superlatives

Green Thumb (most waters in Remember to Hydrate!)

- The Waffle Machine basically exploded. with 247 waters as of October 17, 18:41 EDT (we assume they're still going)

Cactus Award (fewest waters while solving Remember to Hydrate!)

- Dank Poets Society and The C@r@line Syzygy with 4 waters

Quizbowl Champions

- Novelties, Exhibitions, Spectacles! for solving Oxford Fiesta while requesting only 19 clues over 14 questions.

- LMW for solving 7 questions with 0 clues.

Most bloodthirsty team

- kirikirikiring labyrinthers (Previously StriketeamE) for killing 269 of their teammates.

Mystery Manor

4 teams accused Gull (themselves) of murder; 3 teams accused Duck (the constable).

Total guesses per suspect, including duplicates from the same team.

| Suspect | Count |

|---|---|

| Robin | 156 |

| Jay | 50 |

| Wren | 43 |

| Swift | 39 |

| Finch | 30 |

| Crow | 10 |

| Duck | 6 |

| Gull | 4 |

| Other | 9 |

Miscellaneous Stats

0 teams entered the Konami code before hunt start.

7 teams sent us a photo or video of cutting out a ring and tossing it onto a bottle.

11 teams sent in concerned emails about Meta-Eval Times

- 9 emails asking if there was a bug

- 3 follow-up emails asking "are you sure there's no bug?" after we confirmed the puzzle was not broken.

- 2 emails on the day of the final Smash direct saying there was errata because we didn't include Sora, and then this story from KaBaL.

- "In Pin the Tail, after seeing ROOM NAME, we tried to extract like so: since the teammate in the Cafeteria cares about Scrabble scores, we extract the Scrabble score of CAFETERIA. Unfortunately, this broke because the teammate in STORAGE needs a Smash character with a letter inserted.

"One day later, Sora was announced as Smash DLC."

Appendix B: Guesses We Enjoyed

All That's Left To Do is Extract

- THERIGHTANSWER - 90 teams (this was the "last step" of Have You Tried)

Solving Pumpkin Patch with NO Given Pumpkins?!

- CRACKINGTHECRYPTIDS - URLy Onset Stupidity

DC Meet and Greet

- MARKROBERSAIDTHERINGBOTTLEGAMEISASCAM - Time Vultures

Global Gift Shop

- DSTISUTTERCRAPPLEASEDELETEIT - Mobius Strippers

Unhealthy

- OHMYGODWEARETHEHEALTHINSPECTOR - :praytrick:

- 🦁🦁🦁 Galactic LionTAMErs 🦁🦁🦁 , barbara kingsolver, The Wombats, and Tweleve Pack were dirty hackers. We congratulate them on their ingenuity.

Remember to Hydrate!

- DOESTHISPUZZLEEVENHAVEANANSWERORISITJUSTAREMINDERTOHYDRATE - Novelties, Exhibitions, Spectacles!

- MANORMANITSUREISAMYSTERYWHATISCAUSINGTHESECHANGESTOTHEPLANTINTHISMANNER - fires[pi]nning

- OHNOWHYDIDWEUNLOCKTHISPUZZLEJUSTBEFOREMIDNIGHT - Time Vultures

The Mystical Plaza

- MAKEHIMTHINKHESBESTEDYOUBYFRAMINGYOURTWINBROTHERFORHISOWNMURDERBUTINREALITYHEISSTILLALIVETHANKSTOAMACHINEBUILTBYNIKOLATESLABUTYOUAREALSOSTILLALIVEBECAUSEYOURTWINDIEDNOTYOUANDTHENYOUVISITHIMAFTERWARDSANDREVEALYOURSECRETTOHIMBEFOREJUSTSHOOTINGHIMWITHAGUN - THE SHOW'S BACK INTOWN

The Sword Swallowers

- GOEATSH*T - 7 teams

- GOEATSWORDFISH - 3 teams

- WEVEALREADYNEEDLESSLYEATENTHESWORDSWALLOWERSWHATELSECOULDYOUWANTHIMTOEAT - Needlessly Eating Sword-swallowers

- (They solved the puzzle 8 seconds later)

Hall of Mirrors

- SEVENMINUTESINHEAVEN - Duck Soup

- IMREALLYGLADITWASN - Duck Soup

- TTHATBECAUSETHATWOULDBEPRETTYWEIRD - Duck Soup

- APROJECTIONDEVICE - 17th Ring Toss Try's the Charm

General

TWOBROKEGIRLS was submitted 7 times as a backsolve attempt, which normally wouldn't be notable, but 2brokegirlz the team got the final solve of the hunt (Oxford Fiesta, 14 seconds before hunt end).

There were 45 guesses of TEAMMATE across the entire hunt.

Appendix C: Fan Art / Memes

Appendix D: Plagiarism

We loved solving all the submitted puzzles during the hunt! We would like to highlight a few submissions we especially enjoyed, and have collected all the puzzles that we have permission to share here.

Appendix E: Q&A

The Q&A can be found here.